Colin Haine

Technical Project Portfolio

Main projects

Hackathon projects

Spirob

A bio-inspired soft robotic arm modeled after an octopus tentacle for adaptive grasping. I procedurally generated its logarithmic-spiral geometry with mathematical modeling and custom code, then 3D-printed compliant segments and integrated brushless motors, tendon actuation, and embedded IMUs for closed-loop control. I also built a MuJoCo simulation and trained the system with Soft Actor-Critic reinforcement learning to produce robust grasping behaviors.
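As a rough illustration of the logarithmic-spiral idea (a minimal sketch, not the project's actual generator), the centerline of such a shape can be sampled from r = a * exp(b * theta). The function name and the parameters `a`, `b`, `turns`, and `n` are assumptions chosen for this example.

```python
import math

def log_spiral_points(a=1.0, b=0.2, turns=2.0, n=50):
    """Sample n points on the spiral r = a * exp(b * theta).

    theta runs from 0 to 2*pi*turns; all parameter values are illustrative.
    """
    if n < 2:
        raise ValueError("n must be at least 2")
    points = []
    for i in range(n):
        theta = 2 * math.pi * turns * i / (n - 1)
        r = a * math.exp(b * theta)
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points

pts = log_spiral_points()
```

Because the radius grows by a constant factor per unit angle, every segment is a scaled copy of its neighbor, which is what makes the shape convenient to generate procedurally.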

Autoscout

A computer vision robot-tracking system for analyzing FRC matches at scale. It uses YOLO object detection, multi-camera calibration, homography alignment, and data association to track all six robots throughout a match. I built an automated pipeline that processes hundreds of matches and generates structured trajectory data for strategic analysis.
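The homography step can be sketched as follows, assuming a 3x3 matrix that maps camera pixels to field coordinates has already been estimated during calibration. `apply_homography` and `H_EXAMPLE` are hypothetical names and values used only for illustration, not taken from the actual pipeline.

```python
def apply_homography(H, x, y):
    """Map pixel (x, y) to field coordinates with a 3x3 homography H (nested lists)."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    # Divide by the homogeneous coordinate to get 2D field coordinates.
    return u / w, v / w

# Made-up matrix: scale pixels by 0.01 and shift the origin.
H_EXAMPLE = [
    [0.01, 0.0, -1.0],
    [0.0, 0.01, -0.5],
    [0.0, 0.0, 1.0],
]

field_xy = apply_homography(H_EXAMPLE, 640, 360)
```

Projecting every detection into one shared field frame is what lets detections from several cameras be associated with the same robot.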

Clashbot

An AI agent for the real-time strategy game Clash Royale, built to study imitation learning and reinforcement learning for robotics. The model is pretrained on thousands of hours of gameplay using state-action data extracted with computer vision, then improved through self-play in a simulated environment. The project explores multi-head action architectures and scalable training pipelines.

PillPal

PillPal is a smart pill box that reminds patients to take medication and uses an AI chatbot with speech-to-text and text-to-speech to record symptoms through conversation. The data is summarized and shared with doctors through a connected web dashboard for monitoring and prescription management. 1st Place Winner - LG Hacks 2.0.

CanvAI

A Chrome extension that organizes and prioritizes Canvas assignments using AI. It ranks tasks by importance and includes a chatbot that helps students quickly find assignments and resources. Using vector embeddings and retrieval-augmented generation (RAG), the system supports both metadata queries (e.g., due dates) and semantic search across assignment content. 2nd Place Winner - UHS Hacks.
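The semantic-search half of that retrieval step can be sketched with cosine similarity over embedding vectors. This is a minimal illustration under stated assumptions: the three-dimensional vectors and assignment titles in `DOCS` are invented, and a real system would obtain embeddings from a model rather than hard-coding them.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def top_k(query_vec, docs, k=2):
    """Return the titles of the k documents most similar to the query."""
    ranked = sorted(docs, key=lambda d: cosine(query_vec, d[1]), reverse=True)
    return [title for title, _ in ranked[:k]]

# Toy (title, embedding) pairs; values are made up for the example.
DOCS = [
    ("Essay draft", [0.9, 0.1, 0.0]),
    ("Lab report", [0.1, 0.9, 0.1]),
    ("Problem set 4", [0.0, 0.2, 0.9]),
]

result = top_k([0.8, 0.2, 0.1], DOCS, k=1)
```

In a RAG setup, the retrieved items would then be passed to the chatbot as context, while metadata queries such as due dates can be answered by filtering structured fields directly.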

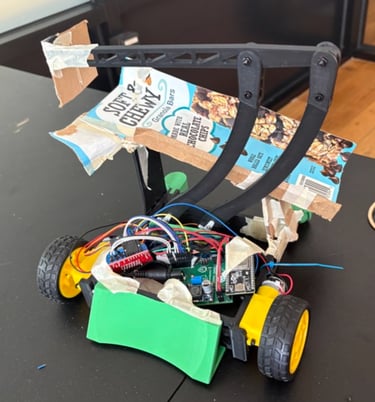

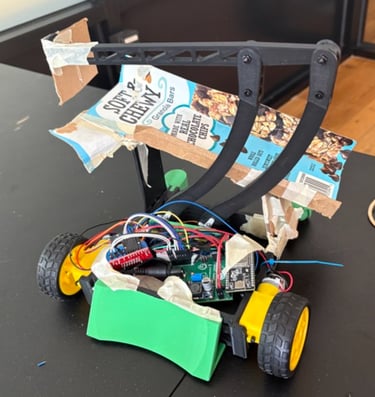

Kittenbot

A competition robot built during a 30-hour hardware/software hackathon. The robot uses a lightweight PLA drivebase and a four-bar linkage arm to collect and score game elements across the field. I programmed the control system on an ESP32 using ESP-NOW wireless communication to achieve fast, low-latency control of the robot’s drivebase and mechanisms. 2nd Place Winner - MakerHacks II.

LSAPP Prediction

Analyzes smartphone app usage patterns to predict the next app a user will open. Using the LSAPP dataset of time-stamped app activity, we trained an LSTM model to learn sequential behavior patterns. The project also includes interactive data visualizations—such as usage dashboards and app transition networks—to explore behavioral trends across thousands of app events.
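The app transition network mentioned above can be approximated by counting which app follows which. The sketch below is a simple first-order baseline, not the LSTM model, and the `EVENTS` sequence is invented for illustration rather than drawn from the LSAPP dataset.

```python
from collections import Counter, defaultdict

def transition_counts(events):
    """Count how often each app is followed by each other app."""
    counts = defaultdict(Counter)
    for current_app, next_app in zip(events, events[1:]):
        counts[current_app][next_app] += 1
    return counts

def most_likely_next(counts, app):
    """Return the most frequent successor of app, or None if it was never seen."""
    if app not in counts or not counts[app]:
        return None
    return counts[app].most_common(1)[0][0]

# Made-up, time-ordered app events.
EVENTS = ["Mail", "Browser", "Mail", "Browser", "Maps", "Mail", "Browser"]

counts = transition_counts(EVENTS)
prediction = most_likely_next(counts, "Mail")
```

The same counts serve as edge weights when drawing the transition network, and a baseline like this gives a reference point for judging a sequence model's accuracy.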

Song Popularity Analysis

A Random Forest model trained on 114,000 Spotify tracks to predict song popularity from audio features (e.g., danceability, loudness, tempo).

Data science projects

Movie clustering

Groups films by similarity using dimensionality reduction and K-Means clustering, then uses the resulting clusters to recommend movies.

Income & air quality

Uses ML and data visualization to analyze pollution sensor data and predict air quality levels from environmental measurements.

Robotics

Miscellaneous

FRC 2025 - Iron Panthers

As Manufacturing Lead for Team 5026 Iron Panthers, I coordinated fabrication and assembly for our 2025 robot. By delivering parts quickly, maintaining shop equipment, and enabling rapid design iteration, I helped our team win the FRC World Championship. I also improved team infrastructure by creating a manufacturing queue, tuning CNC processes, training new members, and developing AutoScout, a computer-vision system that analyzes robots from competition video feeds.

CNC training videos

A series of training videos and accompanying documentation that I made to build up the team's shared CNC knowledge.

Stepper motor robotic arm

An Arduino-controlled robotic arm driven by stepper motors that uses inverse kinematics to calculate precise positioning and generates smooth, coordinated motion trajectories.
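For a sense of what the inverse kinematics involves, here is a minimal sketch for a planar two-link arm. The two-link geometry, the link lengths, and the function names are assumptions made for this example; the actual arm runs on an Arduino and may use a different joint layout.

```python
import math

def two_link_ik(x, y, l1, l2):
    """Return (shoulder, elbow) angles in radians that place the tip at (x, y)."""
    d2 = x * x + y * y
    # Law of cosines gives the elbow angle from the target distance.
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is outside the arm's reachable workspace")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow

def forward_kinematics(shoulder, elbow, l1, l2):
    """Compute the tip position from joint angles, used here to check the IK."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y

shoulder, elbow = two_link_ik(1.0, 1.0, 1.0, 1.0)
tip = forward_kinematics(shoulder, elbow, 1.0, 1.0)
```

Feeding the solved angles back through forward kinematics is a quick sanity check. On stepper hardware, the angles would then be converted to step counts for each motor.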

Servo robotic arm

A 3-DOF robotic arm powered by Arduino-controlled servo motors, enabling precise multi-axis movement and coordinated positioning for basic manipulation tasks.

McGraw Hill Overlay Killer

A Chrome extension that automatically detects and removes intrusive McGraw Hill popups and overlays so students can study without interruption. It has attracted nearly 1,000 users.

Games

Pufferchase

Time Crystal

Survival School

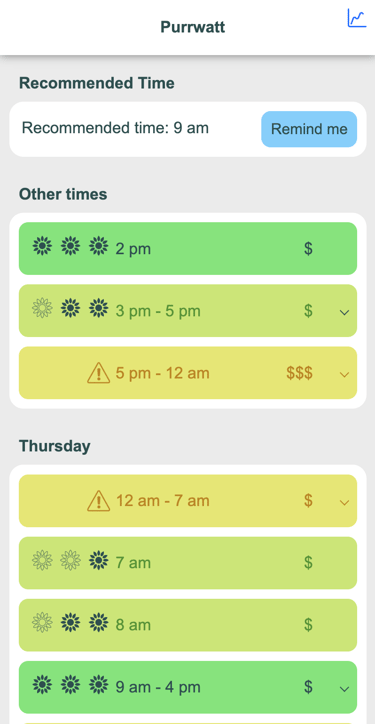

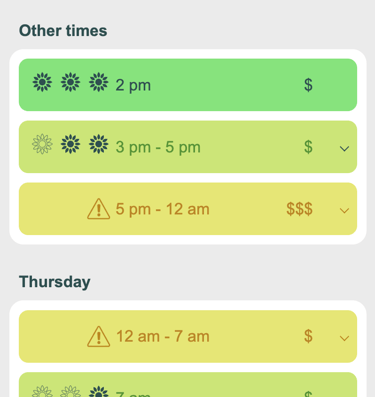

Purrwatt

Purrwatt is an intelligent energy management app that uses real-time CAISO grid and weather data to identify the cleanest and lowest-cost times to use electricity. It helps users shift their energy usage accordingly, reducing both expenses and carbon emissions, and was refined with feedback from professionals at CAISO and PG&E.
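The core scheduling idea can be sketched as a sliding-window search for the lowest total over consecutive hours. The hourly values below are invented carbon-intensity numbers, not CAISO data, and `best_window` is a hypothetical helper rather than the app's actual logic.

```python
def best_window(values, window):
    """Return the start index of the consecutive window with the smallest sum."""
    if window <= 0 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    current = sum(values[:window])
    best_sum, best_start = current, 0
    for start in range(1, len(values) - window + 1):
        # Slide the window: add the entering hour, drop the leaving hour.
        current += values[start + window - 1] - values[start - 1]
        if current < best_sum:
            best_sum, best_start = current, start
    return best_start

# Made-up hourly grid carbon intensity (arbitrary units).
HOURLY_INTENSITY = [400, 380, 300, 250, 260, 420]

start_hour = best_window(HOURLY_INTENSITY, window=2)
```

The same search works for price data, and the two signals could be combined into a single weighted score if both cost and emissions matter.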

Colin Haine is driven by curiosity about how intelligent systems can interact with the real world. He builds projects that combine robotics, machine learning, and software to explore how technology can sense, learn, and adapt.

I’m fascinated by how intelligence can emerge from code, data, and machines. Through robotics, AI, and experimental software projects, I explore how technology can learn from the world and respond to it.